- Image Recognition in AI: It's More Complicated Than You Think

- How to Train AI to Recognize Images

- What Does Image Recognition Bring to the Business Table?

- Final Words

- FAQ

Image recognition is everywhere, even if you rarely give it a second thought. It's there when you unlock a phone with your face or when you look for photos of your pet in Google Photos. It can be big, as in life-saving applications like self-driving cars and diagnostic healthcare. But it can also be small and fun, like that well-known photo recognition app that lets you identify wines by taking a picture of the label.

Computer vision (and, by extension, image recognition) is the go-to AI technology of our decade. MarketsandMarkets research indicates that the image recognition market will grow to $53 billion in 2025, and it is expected to keep growing. The scope of image recognition applications is growing as well. Ecommerce, the automotive industry, healthcare, and gaming are expected to be the biggest players in the years to come. Big data analytics and brand recognition are among the main demands placed on AI, which means that machines will have to learn to better recognize people, logos, places, objects, text, and buildings.

AI-based image recognition is an essential computer vision technology that can be either a building block of a bigger project (e.g., when paired with object tracking or instance segmentation) or a stand-alone task. As the popularity and the range of use cases for image recognition grow, we would like to tell you more about this technology, how AI image recognition works, and how it can be used in business.

Image Recognition in AI: It's More Complicated Than You Think

Artificial intelligence image recognition is a defining part of computer vision (a broader term that includes the processes of collecting, processing, and analyzing visual data). Computer vision services are crucial for teaching machines to look at the world as humans do and for helping them reach the level of generalization and precision that we possess.

For now, we're still far from this goal, although recent years have been marked by real breakthroughs in deep learning, neural networks, and sophisticated image recognition algorithms. It's just an illusion of thinking, however: machines still cannot pick up small, complex cues while simultaneously generalizing at human speed. Image recognition in AI sounds simple to people because our monkey brains are evolutionarily wired to do this task. A kid needs to see only a couple of images of a cat to start recognizing cats in other images. Sometimes you don't even have to show an image of something; a clear enough description will do (say, a horse with a horn: a child will recognize a unicorn even without ever having seen this creature before).

A machine, however, needs hundreds or thousands of examples to be properly trained to recognize objects, faces, or text characters. That's because the task of image recognition is actually not as simple as it seems. It consists of several different tasks (like classification, labeling, prediction, and pattern recognition) that human brains are able to perform in an instant. This is why neural networks work so well for AI image identification: they combine many closely linked processing steps, and the output of one step is the basis for the work of the next.

Given enough time for training, AI image recognition algorithms can offer pretty precise predictions that might seem like magic to those who don't work with AI or ML. Digital giants such as Google and Facebook can recognize a person with nearly 98% accuracy, which is about as well as people can tell faces apart. This level of precision is mostly due to the large amount of tedious work that goes into training the ML models. This is where data processing and data annotation come in. Without labeled data, all that intricate model-building would be for naught. But let's not get ahead of ourselves. First, let's see: how does AI image recognition work?

How to Train AI to Recognize Images

Let's say you're looking at an image of a dog. You can tell that it is, in fact, a dog, but an image recognition algorithm works differently. It will most likely say the image is 77% dog, 21% cat, and 2% donut. These percentages are referred to as confidence scores.

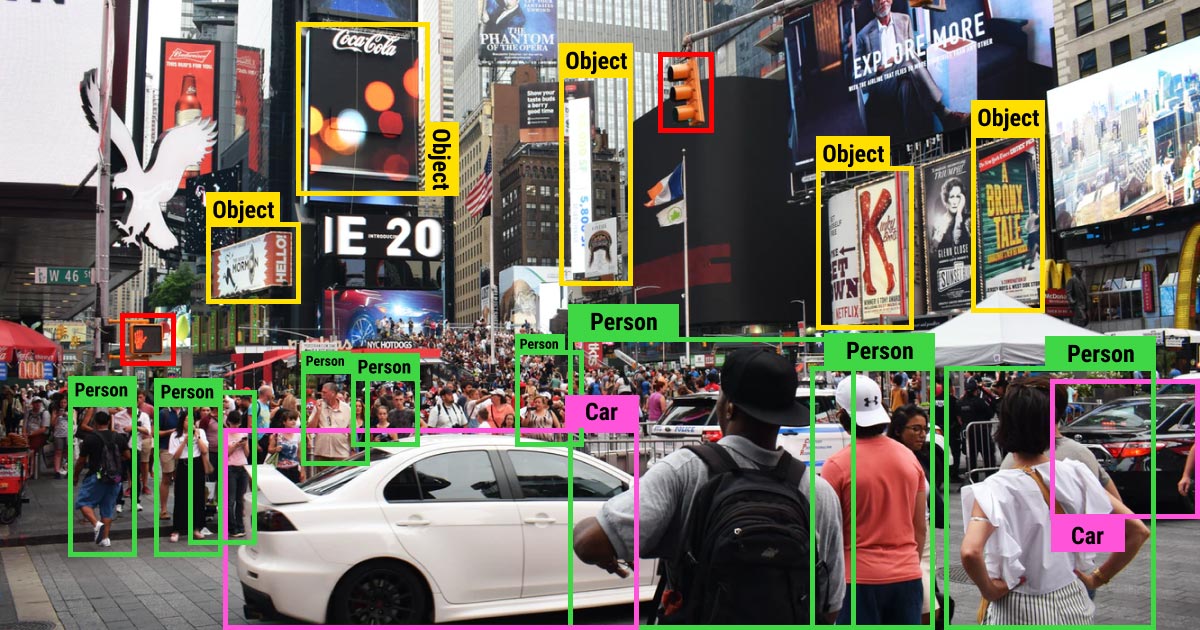

In order to make this prediction, the machine has to first understand what it sees, then compare its image analysis to the knowledge obtained from previous training and, finally, make the prediction. As you can see, the image recognition process consists of a set of tasks, each of which should be addressed when building the ML model.
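To make the idea of confidence scores more concrete, here is a minimal sketch of softmax, one common way of turning a classifier's raw outputs into scores that sum to 100%. The class names and raw values below are made up for illustration; a real model would produce them from the image itself.

```python
import math


def softmax(logits):
    """Turn raw model outputs (logits) into confidence scores that sum to 1."""
    # Subtracting the largest value keeps exp() numerically stable
    # and does not change the resulting scores.
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical class names and raw outputs, chosen only for illustration.
labels = ["dog", "cat", "donut"]
logits = [3.2, 1.9, -0.4]

scores = softmax(logits)

# Print the classes from most to least confident.
ranked = sorted(zip(labels, scores), key=lambda pair: pair[1], reverse=True)
for label, score in ranked:
    print(f"{label}: {score:.0%}")
```

The class with the highest score is usually reported as the prediction, while the remaining scores show how unsure the model is.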

Neural Networks in Artificial Intelligence Image Recognition

Unlike humans, machines see images as raster (a grid of pixels) or vector (geometric shapes such as polygons) data. This means that machines analyze visual content differently from humans, and so they need us to tell them exactly what is going on in the image. Convolutional neural networks (CNNs) are a good choice for such image recognition tasks because they learn from labeled examples which visual features matter. Thanks to their multilayered architecture, they can detect and extract complex features from the data.
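As a rough illustration of what "extracting features" means, the sketch below slides a small grid of weights (a kernel) over a toy grayscale image, which is the core operation of a convolutional layer. The image and the kernel are assumptions made for the example; in a real CNN the kernel weights are learned during training, and an array library would replace the plain Python lists used here.

```python
def convolve2d(image, kernel):
    """Slide a kernel over a grayscale image with no padding.

    Like most deep learning frameworks, this does not flip the kernel,
    so strictly speaking it computes a cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        output.append(row)
    return output


# Toy 4x4 image: dark left half (0), bright right half (9).
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hand-written kernel that responds to dark-to-bright vertical edges.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(image, kernel)
for row in feature_map:
    print(row)
```

The resulting feature map is non-zero only where the window straddles the boundary between the dark and bright halves, which is how a single kernel ends up acting as a detector for one kind of pattern.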

We've compiled a shortlist of steps that an image goes through to become readable for the machines:

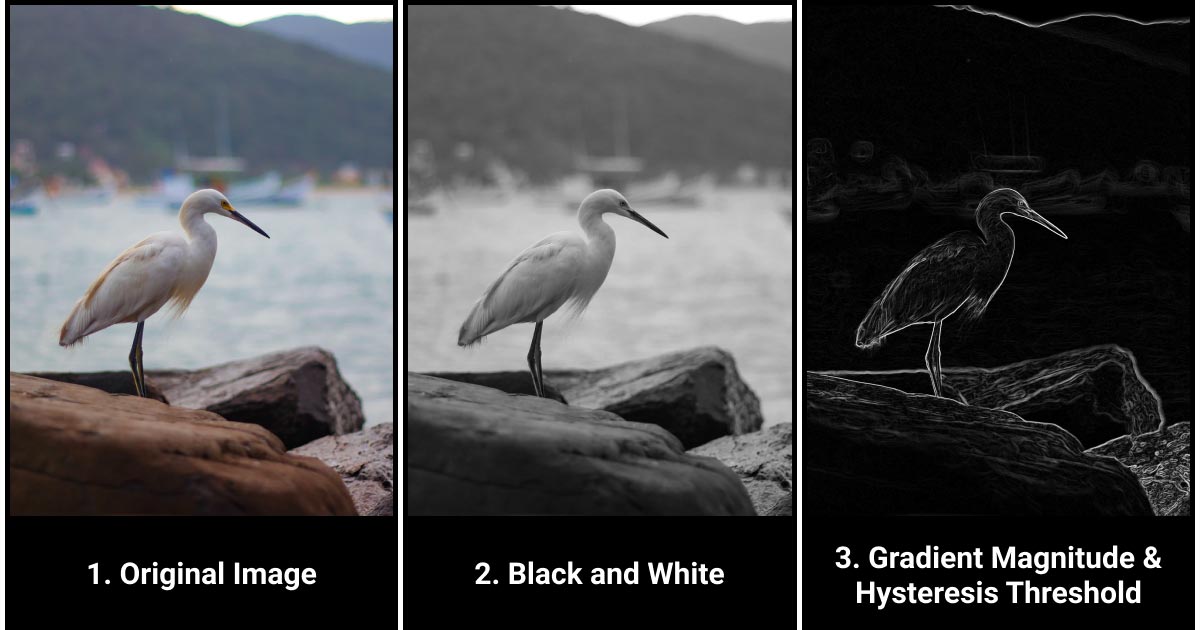

- Simplification. For a start, you have your original picture. You convert it to grayscale and apply some blur. This is necessary for feature extraction, which is the process of defining the general shape of your object and ruling out the detection of smaller or irrelevant artifacts without losing the crucial information.

- Detection of meaningful edges. Then, you compute the gradient magnitude. It gives you the general edges of the object you are trying to detect by measuring how much the intensity changes between adjacent pixels in the image. As an output, you will get a rough silhouette of your primary object.

- Defining the outline. Next, you need to define the edges, which can be done with the help of non-maximum suppression and hysteresis thresholding. These methods reduce the edges of the object to single, most probable lines, and leave you with a simple clean-cut outline. The output geometric lines allow the algorithm to classify and recognize your object.
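The steps above can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not a production edge detector: a 3x3 box blur is assumed for the blur step, and a single fixed threshold stands in for non-maximum suppression and hysteresis thresholding. The toy image and the cutoff value are invented for the example.

```python
import math


def to_grayscale(rgb_image):
    """Average the R, G, B values of each pixel into one intensity."""
    return [[sum(pixel) / 3 for pixel in row] for row in rgb_image]


def box_blur(gray):
    """Replace each pixel with the mean of its 3x3 neighborhood."""
    h, w = len(gray), len(gray[0])
    blurred = []
    for r in range(h):
        row = []
        for c in range(w):
            # Neighborhoods are clipped at the image borders.
            neighbors = [
                gray[rr][cc]
                for rr in range(max(0, r - 1), min(h, r + 2))
                for cc in range(max(0, c - 1), min(w, c + 2))
            ]
            row.append(sum(neighbors) / len(neighbors))
        blurred.append(row)
    return blurred


def gradient_magnitude(gray):
    """Combine horizontal and vertical pixel differences into one value."""
    h, w = len(gray), len(gray[0])
    magnitude = [[0.0] * w for _ in range(h)]
    # Border pixels are left at zero because they lack two neighbors.
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            gx = gray[r][c + 1] - gray[r][c - 1]
            gy = gray[r + 1][c] - gray[r - 1][c]
            magnitude[r][c] = math.hypot(gx, gy)
    return magnitude


def threshold(magnitude, cutoff):
    """Keep only strong edges (simplified stand-in for the outline step)."""
    return [[1 if value >= cutoff else 0 for value in row] for row in magnitude]


# Toy 6x6 RGB image: black left half, white right half.
rgb = [[(0, 0, 0)] * 3 + [(255, 255, 255)] * 3 for _ in range(6)]

gray = to_grayscale(rgb)
blurred = box_blur(gray)
magnitude = gradient_magnitude(blurred)
edges = threshold(magnitude, cutoff=100)  # cutoff chosen for this toy image

for row in edges:
    print("".join("#" if value else "." for value in row))
```

Only the pixels along the black-to-white boundary survive the threshold, which corresponds to the rough outline described in the list.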

This is a simplified description, adapted for the sake of clarity for readers who do not have domain expertise. There are other ways to design an AI-based image recognition algorithm. However, CNNs currently represent the go-to way of building such models. In addition to their other benefits, they require very little pre-processing and essentially answer the question of how to program self-learning for AI image identification.

Annotate the Data for AI Image Recognition Models

It is well known that the bulk of the human effort and time in an ML project is spent on assigning tags and labels to the data. This produces labeled data, which is the resource that your ML algorithm will use to learn a human-like vision of the world. Naturally, models that allow artificial intelligence image recognition without labeled data exist, too. They belong to unsupervised machine learning; however, these models come with a lot of limitations. If you want a properly trained image recognition algorithm capable of complex predictions, you need to get help from experts offering image annotation services.

What data annotation in AI means in practice is that you take your dataset of several thousand images and add meaningful labels or assign a specific class to each image. Usually, enterprises that develop the software and build the ML models have neither the resources nor the time to perform this tedious and bulky work. Outsourcing is a great way to get the job done while paying only a small fraction of the cost of training an in-house labeling team.
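As a small illustration of what labeled data can look like once annotation is done, the sketch below pairs image files with class labels and converts the labels into the integer targets that a classifier trains on. The file names, labels, and record layout are hypothetical; real annotation tools export their own formats.

```python
from collections import Counter

# Hypothetical annotation records: file names and labels are made up.
annotations = [
    {"file": "img_0001.jpg", "label": "dog"},
    {"file": "img_0002.jpg", "label": "cat"},
    {"file": "img_0003.jpg", "label": "dog"},
    {"file": "img_0004.jpg", "label": "donut"},
]

# Give each class a stable integer index, since models train on numbers.
classes = sorted({record["label"] for record in annotations})
class_to_index = {name: index for index, name in enumerate(classes)}

# Encoded targets, in the same order as the images.
targets = [class_to_index[record["label"]] for record in annotations]

# Class counts help spot an imbalanced dataset before training.
label_counts = Counter(record["label"] for record in annotations)

print(class_to_index)
print(targets)
print(label_counts.most_common())
```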

Hardware Problems of Image Recognition in AI: Power and Storage

After getting your network architecture ready and carefully labeling your data, you can train the AI image recognition algorithm. This step is full of pitfalls that you can read about in our article on AI project stages. A separate issue that we would like to share with you deals with the computational power and storage constraints that drag out your schedule.

Hardware limitations often represent a significant problem for the development of an image recognition algorithm. Computational resources are not limitless, and images are a heavy type of content that takes a lot of power to process. Besides, there's another question: how does an AI image recognition model store data?

To overcome the limitations of computational power and storage, you can work on your data to make it more lightweight. Compressing the images allows you to train the image recognition model with less computational power while not losing much in terms of training data quality. It is also in line with the steps that CNNs will perform when processing your images. Turning images black-and-white has a similar effect: it saves storage space and computational resources without losing much of the visual data. Naturally, these measures are not exhaustive, and they need to be applied with your goal in mind. High-quality data is still required for building an accurate algorithm. However, you might find enough leeway to keep the schedule and cost of your image recognition project in check.
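A back-of-the-envelope calculation shows why these measures matter. The sketch below estimates the uncompressed size of a dataset before and after converting it to grayscale and shrinking the images. The dataset size and resolutions are assumptions picked for the example, and resizing is used here as a simple stand-in for compression, since actual compression ratios depend on the format and the image content.

```python
def raw_size_bytes(width, height, channels, bytes_per_channel=1):
    """Uncompressed size of a single image in bytes."""
    return width * height * channels * bytes_per_channel


# Assumed example: 10,000 images, 8 bits per channel.
num_images = 10_000

original = raw_size_bytes(1024, 1024, channels=3)        # RGB
grayscale = raw_size_bytes(1024, 1024, channels=1)       # one channel
downscaled_gray = raw_size_bytes(256, 256, channels=1)   # smaller and gray

variants = [
    ("1024x1024 RGB", original),
    ("1024x1024 grayscale", grayscale),
    ("256x256 grayscale", downscaled_gray),
]

for name, size in variants:
    total_gib = size * num_images / 1024**3
    print(f"{name}: {total_gib:.2f} GiB for {num_images} images")
```

Dropping the color channels cuts the raw size to a third, and reducing each side by a factor of four cuts it by a further factor of sixteen. Whether the model can afford to lose that detail depends on the task.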

What Does Image Recognition Bring to the Business Table?

Now that we've talked about the "how", let's look at the "why". Why is image recognition useful for your business? What are some use cases, and what does the future hold for this form of artificial intelligence?

The most obvious AI image recognition examples are Google Photos and Facebook. These powerful engines are capable of analyzing just a couple of photos to recognize a person (or even a pet). Facebook suggests people you might know based on this feature. There are also some curious e-commerce uses for this technology. For example, with the AI image recognition algorithm developed by the online retailer Boohoo, you can snap a photo of an object you like and then find a similar object on their site. This spares customers the pain of looking through a myriad of options to find the thing that they want.

Facial recognition is another obvious example of image recognition in AI that needs no introduction. There are, of course, certain risks connected to the ability of our devices to recognize the faces of their owners. Image recognition also promotes brand recognition as the models learn to identify logos. A single photo allows searching without typing, which seems to be a growing trend. Detecting text is yet another side of this technology, as it opens up quite a few opportunities (thanks to expertly handled NLP services) for those who look into the future.

Here's one more fascinating case among the numerous AI image recognition examples: let's say you are in a restaurant with your colleagues. The bill arrives, and you start to type in numbers to split it fairly. That can be quite frustrating after a fine meal; instead, you could download an app that reads every line item and lets you split the bill automatically. Isn't AI great? And while we're talking about machines reading text, we should not forget about automation. Actually, we've dedicated a whole two-parter to the topic of automated data collection and OCR, so don't forget to visit it to learn more!

Final Words

There's a reason why AI image recognition has become an essential technology of our time: it holds great potential for a variety of industries. In manufacturing, defect recognition is seen as one of the most significant steps that can save businesses a lot of money. Insurance companies are starting to use AI image recognition technology to personalize their approach to customers by evaluating how they drive or care for their homes.

Fashion brands develop applications that digitize the shopping world: these allow shoppers to make considered decisions via augmented reality. Experts talk about revolutionizing video gaming and directing it outwards and away from the devices by tracking human bodies as they move in real time. Autonomous vehicles are now closer than ever to becoming mainstream. None of these projects is possible without image recognition technology. And we are sure that, if you're interested in AI, you can find a great use case in image recognition for your business.

FAQ

How to use an AI image identifier to streamline your image recognition tasks?

To use an AI image identifier, simply upload or input an image, and the AI system will analyze and identify objects, patterns, or elements within the image, providing you with accurate labels or descriptions for easy recognition and categorization.

What exactly is AI image recognition technology, and how does it work to identify objects and patterns in images?

Together, AI and image recognition technology form a computer-based system that uses advanced algorithms to analyze and interpret visual content in images. It recognizes and categorizes objects and patterns based on the patterns and features it learned during training.

How does AI identify images?

Image recognition algorithms use deep learning models trained on labeled datasets to distinguish patterns in images. More specifically, AI identifies images with the help of a trained deep learning model, which processes image data through layers of interconnected nodes, learning to recognize patterns and features to make accurate classifications. This way, you can use AI for picture analysis by training it on a dataset consisting of a sufficient number of professionally tagged images.
