New business opportunities are hard to find. The companies are constantly seeking competitive advantages, and artificial intelligence solutions are at the top of the priority list. They help to automate business processes and facilitate decision-making. But machine learning needs fuel to work on, and this fuel is labeled data.

We dedicated the last two articles to understanding labeled and unlabeled data, why and how to use both types. Now, let's see how the data is annotated and what you should do before the labeling starts. Here are a few essential questions we'll answer this time:

- Do you need data annotation for building your AI and why?

- What are labels in machine learning?

- How to label data for machine learning?

- What is the role of a data labeler in the annotation process?

- What to do with your data before the annotation?

Why Is Data Annotation Important for ML and AI?

There is scarcely a field that wouldn't benefit from adopting AI. We carry it in our pockets and let it drive our cars. It helps develop effective business and marketing strategies, as well as win in human games. And nearly all of it is done with the help of data labeling.

As we’ve discussed in our article about data annotation market trends this year, the market’s value stood at $1.3 billion as of the close of 2022, and it’s expected to soar to $5.3 billion by the year 2030. This is not a surprising trend: the ML professionals say any product and service will include AI in one way or another in just a few short years. What makes data annotation in machine learning so valuable?

We see unlabeled data all around us. Thousands of emails in your business account, hundreds of photos of your products, dozens of presentation videos and recordings of speeches. But the majority of algorithms used today need labeled data to learn, so processing the raw data that you have is still the most feasible alternative for training machine learning models.

Labeled data in machine learning is much more valuable because it provides an accurate estimation of the conditions of our world. It shows understandable patterns and tells the machine what to look for. This helps with advanced classification with localization and building complex forecasting models. After the ML algorithm is trained, it is able to find similar patterns in the new datasets that you feed into it.

Limitations of Data Annotation in ML

Still, labeling data is not only the engine that powers machine learning but also a great limitation in training AI. Experts point out that data annotation might be the single most constraining factor in machine learning. Why? There are two major reasons for this.

First, it might be hard to get vast sets of data in highly specialized fields. And even if you get the data, it can be untrustworthy or faulty in any of a number of ways. Second, data annotation itself is an expensive and time-consuming process. It requires the work of human experts who will manually add tags and labels to each piece of your data. Again, in those highly specialized areas, you might need professionals to do the job (like tagging tumors on X-ray images, hardly a task that an unprepared annotator can perform).

On one hand, these shortcomings led to decreasing investments and the adoption of 'wait-and-see' attitudes. On the other hand, they also encouraged the search for other options. While labeled data is used in supervised learning models, semi-supervised and unsupervised algorithms rely little (or not at all) on the annotation process. Reinforcement learning and Generative Adversarial Networks also offer promising scenarios.

However, despite the alternatives, data annotation is still at the core of most machine learning models. It is trustworthy and straightforward, proven time and time again. At Label Your Data, we believe that data labeling is the most powerful tool at your disposal, and the machines still need human experts to learn.

How to Label Data for Machine Learning?

Let's start with the basics and answer this question: what is label in machine learning? A label or a tag is simply an identifying element that explains what a piece of data is. For an image, this might be telling a model that there is a person or a tree. For an audio recording, an annotator writes the words that are being said.

The labels let the ML model learn by example. You don't explain what a car is. Instead, when the model has seen enough examples of photos with a car on it, it will be able to spot a car on a photo without labels. A similar mechanism works in other cases: e.g., with enough training on the datasets of emails with labels 'spam' or 'not spam', the model will learn to tell the two types apart and put them in different folders.

Fields of AI

Data annotation in AI can be used for any type of data: images, videos, audio, and text. At LYD, we offer annotation services in two major fields of AI: Computer Vision (CV) that mostly involves image and video labeling use cases, and NLP data labeling (Natural Language Processing), which focuses on texts with addition of audio data. Let's take a brief look at what both of these fields can offer you.

Computer Vision

A picture is worth a thousand words. That's one reason why people are keen to teach the machines to understand the visual content. We love our images and videos because it's easy for humans to understand what we see. However, computers don't share this feature with us. In order for an AI to see the world how we see it, it's necessary to show the machine thousands of examples and let it learn with the help of computer vision services. That's why human annotators use such types of annotation as bounding boxes and polygons to identify the object, show its shape and track its position in space.

Natural Language Processing

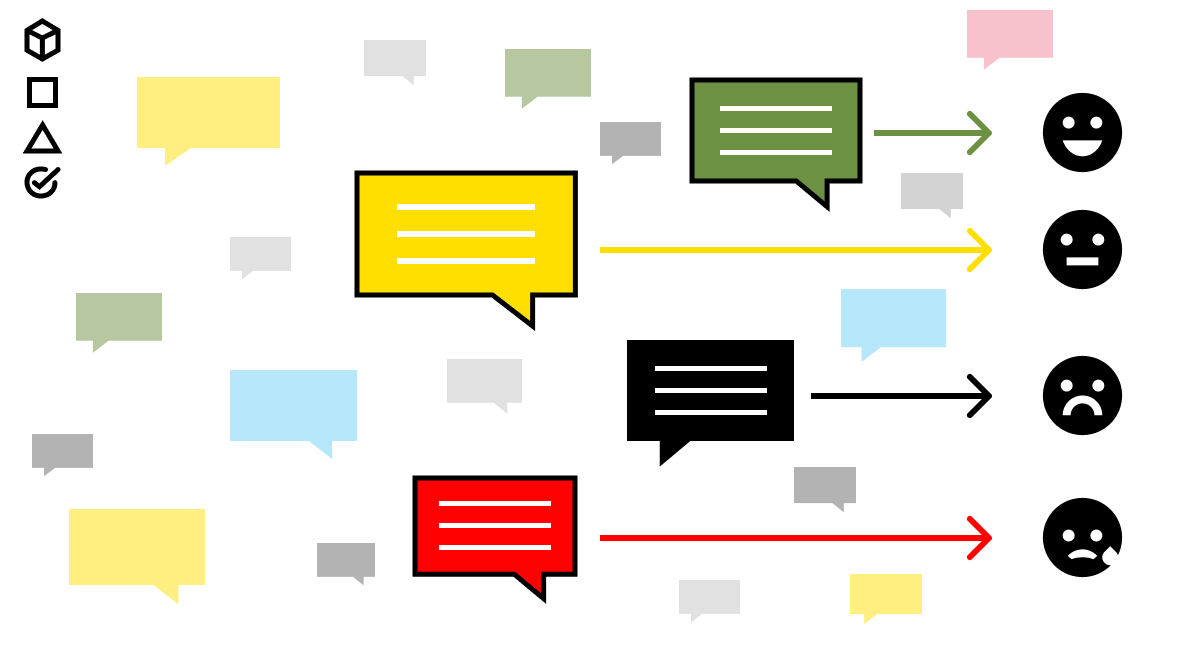

As the name suggests, this field of AI aims to explain the language of people to the machines. People communicate in their naturally developed languages (as opposed to the artificial computer languages). Through text or audio recordings, machines learn to identify the variety of meanings through expert NLP services. Audio-to-text transcription is an easy example: you take a recording of a speech and turn it into text. Intent and sentiment analysis is a more complicated type of annotation. It relies on the machine determining what mood the text has and what purpose the author of the text pursues.

[Fun fact: the volume of text information is much larger than the amount of image/video data. Despite this, CV is a more popular field for both developers and users. And it's only natural: CV is visual and spectacular, while NLP requires hard work and is at times more complicated and tedious in terms of the labeling process.]So What Does a Data Labeler Do?

Now you understand what types of data can be labeled and how to label data for machine learning. In this complex process of data annotation, the leading role is played by a data labeler, a human professional who manually arranges and labels your data by adding tags to each piece.

The role of a human labeler cannot be overlooked. As we've mentioned before, labeled datasets are used in supervised machine learning models. The name indicates that there is human supervision that helps the machine to get through the training process. Why human supervision? Because there are tasks that are easy for us but hard for the machines. For example, facial recognition is a simple task that even our babies can do. But for the computers to discern between different people, a lot of training has to be done. And that's why we need human annotators.

Are There Better Options Than Human Data Labelers?

Obviously, working with people means there's a human factor that notoriously brings disaccord. Yes, we have flexible and competent minds to expertly label data for machine learning. But people are not only rainbows and unicorns; there are disadvantages to consider.

| Advantages of human annotators | Disadvantages of human annotators |

|

Quality Human mind is not a rigid structure like a machine algorithm. It can spot a faulty element with ease or overcome an obstacle that will ruin the most intricate ML model. Human labelers offer precision and accuracy that is crucial for a data annotation project. |

Risk of mistakes People can and will make mistakes; that's one of the mechanisms that allows us to learn and improve. To avoid mistakes in data annotation, it is crucial to include a round of QA. At LYD, we sometimes have a couple of rounds just to make sure we didn't miss anything. |

|

Flexibility and customization Human annotators can adapt to the changing conditions of a task and accept modifications on the go. This flexibility allows building state-of-the-art annotation projects that correspond to your specific needs. |

Limited volume The benefit that automated data labeling tools have over human annotators is certainly the volume of a dataset. With a GAN, you can have a lot of new data generated over the course of several days. That is, in case you have the computing power ready for the task. |

|

Cost Human labor is expensive. This is especially true if you're building an in-house annotation team. On the other hand, consider the amount of computing power that a no-human labeling tool requires. Besides, outsourcing or crowdsourcing an annotation project will cost less, which is great news if you don't have a need for a permanent labeling team. |

Time-consuming and labor-intensive This is one of the primary constraints of data annotation that relies on human experts. Labeling big datasets takes time and effort. This is also one of the major factors why outsourcing an annotation project is so popular today: you pass the tedious task to someone else and focus on strategic goals instead. |

Human annotators have their drawbacks. And you have a choice of automated annotation tools: a machine can do the labeling job instead of expensive human resources. However, these tools yet need to be developed to the point where they offer more advantages compared to human labelers. For now, the quality, flexibility, and cost offered by human labelers is a more effective solution. And you can avoid a lot of stress on your team and budget if you outsource or crowdsource your project to a label center. Read our article on building an in-house team vs. outsourcing if you want to make a weighted decision.

Prior to Annotation: Make Your Data Work for You

You've set your goals, collected the data and decided to build a team or outsource the annotation project. But there's still more you can do to make your data more effective.

In a way that labeled data feeds supervised learning models, unlabeled data can be used for unsupervised machine learning. The good news is, at this stage, you don't need labels at all. Even better news: these models allow you to preprocess your data. Clustering groups similar pieces of data together. Dimensionality reduction simplifies your dataset in accordance with your strategic goal. There is literally no excuse not to use unsupervised machine learning algorithms before labelling data for machine learning. Read more about how and why to use unlabeled data.

Besides, you can also combine the labeled and unlabeled data in a semi-supervised learning model. This will significantly reduce the required volume of manually labeled data while still giving you the big annotated dataset you need. For example, active learning is one way to ensure you use as little labeled pieces as possible. We will further elaborate on the models of semi-supervised machine learning; it's an exciting and useful topic! Subscribe to our emails to stay tuned.

How Much Training Data Does an ML Algorithm Need?

Here’s a good question that everybody wants to be answered. The truth is, there is no single answer. The required volume of your training set depends on a number of factors:

- Your strategic goal

- The sophistication of your AI model

- The difficulty of the task

- The complexity of your data

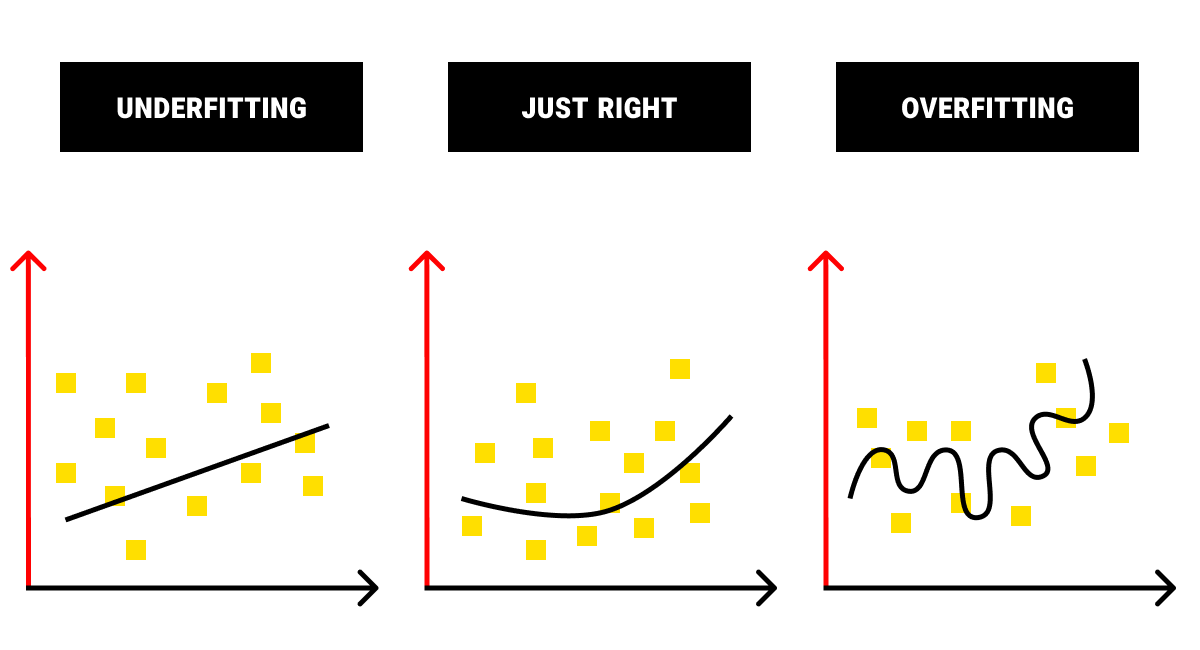

Here's how one of our annotation experts put it when we asked how much training data is required for an ML algorithm: the more data the better, but not more than necessary. Too little training data, and your model will be making predictions with significant error margins. Make the training dataset too big, and your algorithm will take ages to learn.

So how to understand how much is enough? Usually, an experienced AI professional knows how much data is needed for a project. In other cases, the necessary volume is defined in empirical ways. It's often a path full of trials and mistakes; don't be afraid to step on it.

In Summary: What You Really Need to Know about Data Labeling in ML

AI solutions are everywhere we look, from our phones to robots that clean our houses to business tools that facilitate our decision-making. Data labeling as an industry has been steadily growing together with the development of AI projects. We've discussed a few important points of data labeling in the article but, in case you've found it too long to read, here are the key takeaway points:

- In machine learning, a label is added by human annotators to explain a piece of data to the computer. This process is known as data annotation and is necessary to show the human understanding of the real world to the machines.

- Data labeling tools and providers of annotation services are an integral part of a modern AI project. Data annotation is time-consuming, labor-intensive, and expensive, which sets a lot of limitations on machine learning. Despite this, there is still no better way to train your model than with the help of labeled data.

- Data annotation can be used for any type of data: image, video, audio, and text. Computer Vision projects cover image and video data and help to show the machine how people see the world. Natural Language Processing aims to explain human communication to the computer and works with the data in the form of audio and text.

- Human experts play the critical role in the process of data annotation, since they provide supervision over the training process of an ML model. It is possible to use automated tools instead of human experts. Still, the benefits that annotators provide (quality, flexibility, and cost) cover the potential drawbacks (risk of mistakes, limited volume, and time consumption).

- Before you begin to label data for machine learning, you can make it work for you by the ways of preprocessing. There are many additional services that data annotation companies offer to prepare your data for the labeling stage. Unsupervised ML allows you to structure and cluster the data. Semi-supervised ML models can significantly decrease the required volume of labeled data.

- To make the most out of your resources, you should know how much training data you need. Having either too little or too much of it will have a degrading effect on your AI project. Remember that determining the volume of training data can be a complicated process of trial-and-error.

Now you have an understanding of the ML data labeling process, what features and elements it has, and what steps you can take before labeling your dataset. If you're ready to annotate the data, we'll be glad to offer you the best service, where quality meets security and speed. Let us know what your AI project is, and we will help!

FAQ

What is data labeling in AI?

Data labeling in AI is the process of adding descriptive tags or annotations to raw data. This crucial step is essential for training machine learning models to understand and interpret that data accurately. In the context of labeling approaches, the choice of the most suitable strategy, whether it’s supervised, active learning, or leveraging transfer learning, directly impacts the efficiency and performance of the AI model being developed.

What are the labels in a dataset?

Labels in a dataset refer to the predefined tags or annotations assigned to the data. So the labeled data meaning in machine learning is essential for training models to recognize patterns and make predictions based on the provided labels.

What are labels in unsupervised learning?

In unsupervised learning, labels are absent, and the algorithm identifies inherent patterns or structures in the data without predefined categorization. We can say that, in this case, these are algorithmically generated labels.

Table of Contents

Get Notified ⤵

Receive weekly email each time we publish something new: