Video Annotation: Workflow Tips for Better Datasets

Table of Contents

- TL;DR

- Why Video Annotation Needs a Workflow

- Setting Clear Objectives Before Video Annotation

- Preparing Data for Video Annotation

- Video Annotation Tools: What to Look For

- Preparing a Labeling Guide That Annotators Will Follow

- Speeding Up Video Annotation with Automation

- Keeping Your Annotation Team Aligned

- Adding Quality Checks Without Slowing Down

- Integrating Video Annotation into Your ML Workflow

- When to Outsource Your Video Annotation Tasks

- About Label Your Data

- FAQ

TL;DR

- You need efficient video annotation if you’re training high-performing machine learning models.

- Videos require precise temporal and spatial labeling, which makes it essential to optimize your workflows.

- You can improve the process by setting clear goals, using the right annotation tools, and maintaining a structured quality control process.

Why Video Annotation Needs a Workflow

Teaching AI to do anything works better when you adopt a structured approach. If your machine learning dataset is chaotic, your application will never live up to its full potential. A clear video annotation workflow streamlines the process and makes it easier to replicate your successes.

Why Video is Harder Than Images

Video is harder to annotate than images because of temporal dependencies, high frame counts, motion blur, and occlusions.

Unlike images, video frames are interrelated. You have to recognize objects in each frame and keep their identities consistent from one frame to the next. This goes beyond per-frame semantic segmentation because you also have to account for how objects move.

A single video can contain thousands of frames, which makes manual annotation time-consuming. Imagine applying panoptic segmentation to every frame: it would take ages. On top of that, motion blur and occlusions can leave objects only partially visible, so they need careful labeling to avoid missing data.
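To put "thousands of frames" in perspective, here is a back-of-the-envelope estimate for fully manual, frame-by-frame labeling. The object count and seconds-per-object figures are placeholder assumptions, not measured values; plug in numbers from your own pilot.

```python
# Rough effort estimate for fully manual, frame-by-frame annotation.
# All inputs are hypothetical placeholders; replace them with your own numbers.

def frame_count(duration_s: float, fps: float) -> int:
    """Number of frames in a clip of the given length and frame rate."""
    return round(duration_s * fps)


def manual_hours(duration_s: float, fps: float,
                 objects_per_frame: float, seconds_per_object: float) -> float:
    """Hours needed to label every object in every frame by hand."""
    total_seconds = frame_count(duration_s, fps) * objects_per_frame * seconds_per_object
    return total_seconds / 3600


if __name__ == "__main__":
    # A 10-minute clip at 30 fps, ~5 objects per frame, ~10 s per object (assumed)
    print(frame_count(600, 30))          # 18000
    print(manual_hours(600, 30, 5, 10))  # 250.0
```

Even with modest assumptions, a single 10-minute clip lands in the hundreds of hours, which is why sampling, interpolation, and tracking matter.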

Where Most ML Teams Lose Time

Without a structured workflow, even image annotation services often struggle with:

- Frame-by-frame annotation: Manual labeling without interpolation or tracking.

- Label drift: Inconsistent standards from poor documentation.

- Redundant effort: Over-annotating or mislabeling leads to costly rework.

What a Strong Workflow Can Fix

With a structured approach, you can:

- Reduce annotation time using automation and interpolation.

- Improve accuracy by providing clear annotation guidelines.

- Enhance scalability by making sure you consistently label objects across teams.

Setting Clear Objectives Before Video Annotation

Every journey starts with a clear destination in mind. You don’t just hop in your car and drive aimlessly across the country, so why expect a machine learning algorithm to operate in a similar way?

Define the Goal of Your ML Model

Before data annotation, you must define your:

- Model requirements: Is the task object detection, activity recognition, or instance segmentation?

- Annotation priorities: You should prioritize key objects for labeling and ignore irrelevant details.

Choosing the Right Video Annotation Type

It’s essential to choose the annotation type that fits your task, because that choice directly affects your model’s accuracy:

- Bounding boxes: Useful for object detection but not always ideal for precise segmentation.

- Polygons: Capture irregular object shapes and are ideal for instance segmentation.

- Keypoints: You can use these for pose estimation and motion tracking.

- Cuboids: Essential for 3D object tracking, particularly in autonomous driving.

Avoiding Over-Labeling or Vague Categories

Naturally, you want to balance accuracy against time spent:

- Maintain granularity balance: Over-labeling increases annotation time without significant performance gains.

- Create clear category definitions: Avoid ambiguous labels that may confuse annotators. For example, is it a cat, a kitty, or a kitten?
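One way to settle the "cat, kitty, or kitten" question is to keep a small taxonomy with one canonical class per concept and map known synonyms onto it. The sketch below is a minimal illustration; the class names, definitions, and synonym table are hypothetical.

```python
# A minimal label taxonomy: one canonical class per concept, with known
# synonyms mapped onto it. Class names and synonyms are hypothetical examples.

CANONICAL_CLASSES = {
    "cat": "Any domestic cat, regardless of age or size.",
    "dog": "Any domestic dog, regardless of age or size.",
}

SYNONYMS = {
    "kitty": "cat",
    "kitten": "cat",
    "puppy": "dog",
}


def normalize_label(raw: str) -> str:
    """Map a raw label to its canonical class, or raise if it is unknown."""
    label = raw.strip().lower()
    label = SYNONYMS.get(label, label)
    if label not in CANONICAL_CLASSES:
        raise ValueError(f"Unknown label {raw!r}; add it to the taxonomy or fix the annotation")
    return label
```

Raising on unknown labels, rather than silently passing them through, surfaces vague categories early, while the guide is still cheap to change.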

Preparing Data for Video Annotation

Organizing your raw video data before labeling can save hours of work later.

Breaking Long Files Into Logical Clips

Here’s how you can streamline the process:

- Scene-based segmentation: You can divide your videos at scene changes to improve annotation efficiency.

- Handling continuous streams: For real-time video such as security footage, clip-based segmentation helps annotators focus on key events.
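Scene-based splitting can be approximated by comparing color or intensity histograms of consecutive frames and cutting where they change sharply. The sketch below assumes frames are already decoded into flat lists of 0 to 255 grayscale values; the bin count and threshold are illustrative and would need tuning on real footage. Dedicated scene-detection tools are more robust, so treat this only as an outline of the idea.

```python
# Scene-based splitting sketch: compare grayscale histograms of consecutive
# frames and start a new clip when the difference jumps. Frames are assumed to
# be flat lists of 0-255 pixel values; bins and threshold are hypothetical.

def histogram(frame, bins=16):
    """Normalized grayscale histogram of one (non-empty) frame."""
    counts = [0] * bins
    for px in frame:
        counts[px * bins // 256] += 1
    total = len(frame)
    return [c / total for c in counts]


def scene_boundaries(frames, threshold=0.5, bins=16):
    """Return (start, end) frame ranges, end exclusive, split at scene cuts."""
    if not frames:
        return []
    cuts = [0]
    prev = histogram(frames[0], bins)
    for i in range(1, len(frames)):
        cur = histogram(frames[i], bins)
        # Total variation distance between histograms: 0 = identical, 1 = disjoint
        diff = 0.5 * sum(abs(a - b) for a, b in zip(prev, cur))
        if diff > threshold:
            cuts.append(i)
        prev = cur
    cuts.append(len(frames))
    return [(cuts[k], cuts[k + 1]) for k in range(len(cuts) - 1)]
```

For example, three dark frames followed by two bright ones yield `[(0, 3), (3, 5)]`: two clips that can be assigned and reviewed independently.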

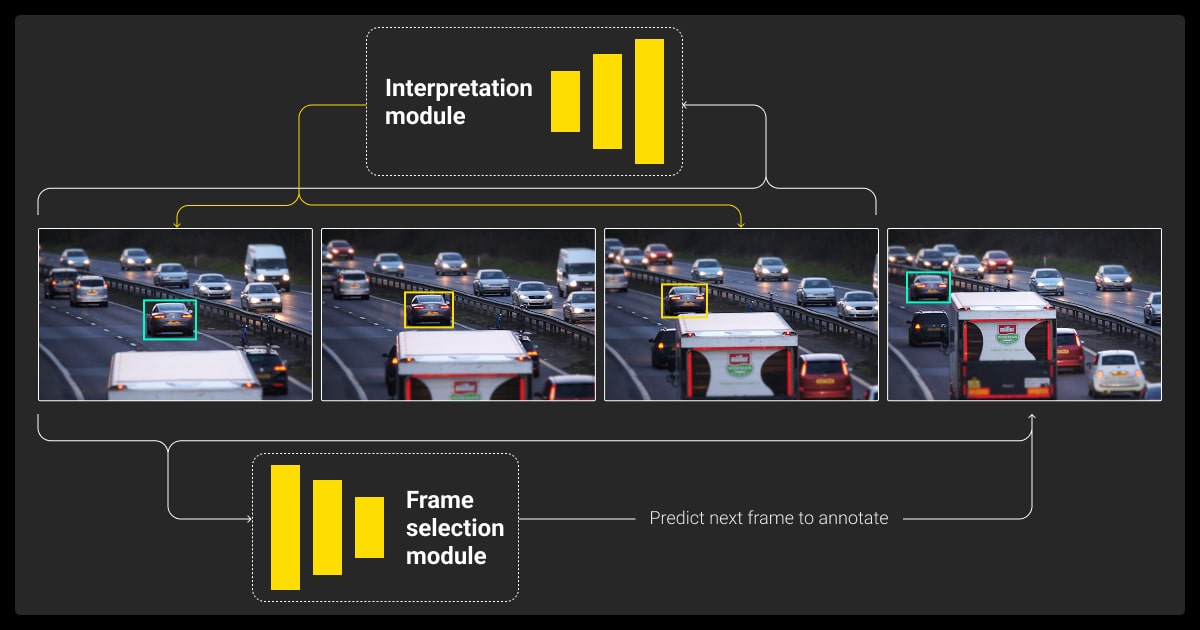

Using Smart Frame Sampling in Video Annotation

- FPS settings: It’s inefficient to annotate every frame. Instead, you can use keyframes or uniform sampling.

- Scene cuts: You can use motion-based frame selection to minimize redundant labeling.
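Both sampling strategies are short to express in code. This sketch assumes decoded frames stored as flat lists of grayscale values; the target frame rate and the motion threshold are hypothetical values to tune per project.

```python
# Two frame-sampling strategies, sketched on frames stored as flat lists of
# grayscale values. The target FPS and motion threshold are hypothetical.

def uniform_sample(num_frames, source_fps, target_fps):
    """Indices of frames to keep when downsampling to roughly target_fps."""
    step = max(1, round(source_fps / target_fps))
    return list(range(0, num_frames, step))


def motion_sample(frames, min_change=10.0):
    """Keep a frame only if it differs enough from the last kept frame."""
    if not frames:
        return []
    kept = [0]
    for i in range(1, len(frames)):
        last = frames[kept[-1]]
        # Mean absolute pixel difference against the last kept frame
        change = sum(abs(a - b) for a, b in zip(frames[i], last)) / len(last)
        if change >= min_change:
            kept.append(i)
    return kept
```

Comparing against the last *kept* frame, rather than the immediately preceding one, means slow drift still accumulates until it crosses the threshold, so gradual motion is not skipped entirely.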

Keeping Naming and Metadata Consistent

Use standardized file structures to prevent confusion across datasets. Including metadata such as frame timestamps and scene IDs also helps maintain your dataset’s integrity.
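A naming convention is easiest to enforce when it is generated rather than typed. The sketch below uses a hypothetical `<project>_<video>_s<scene>_f<frame>.jpg` pattern and derives each frame's timestamp from its index and the video's frame rate; the pattern and field names are examples, not a standard.

```python
# A hypothetical naming convention plus a per-frame metadata record.
from dataclasses import dataclass


@dataclass
class FrameMeta:
    project: str
    video_id: str
    scene_id: int
    frame_index: int
    fps: float

    @property
    def timestamp_s(self) -> float:
        """Timestamp of the frame within the source video, in seconds."""
        return self.frame_index / self.fps

    @property
    def filename(self) -> str:
        # Zero-padding keeps files in the right order when sorted by name
        return f"{self.project}_{self.video_id}_s{self.scene_id:03d}_f{self.frame_index:06d}.jpg"

    def to_record(self) -> dict:
        """Flat dict suitable for a JSON or CSV manifest."""
        return {"file": self.filename, "video_id": self.video_id,
                "scene_id": self.scene_id, "frame": self.frame_index,
                "timestamp_s": round(self.timestamp_s, 3)}
```

For example, `FrameMeta("traffic", "cam04", 2, 450, 30.0).filename` gives `traffic_cam04_s002_f000450.jpg`, with a timestamp of 15.0 seconds.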

Breaking down longer videos into 2–3 minute segments and using automated interpolation between keyframes helps us maintain both speed and accuracy. It also makes it easier to manage large projects and keep work consistent across teams.
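The segmenting step in that tip is easy to script. Here is a minimal sketch that assumes you know the total frame count and frame rate; the 150-second default is just one value inside the 2 to 3 minute range, not a recommendation from any specific tool.

```python
# Split a long video into fixed-length segments (150 s by default).
# Returns (start, end) frame ranges, end exclusive.

def fixed_segments(total_frames, fps, segment_s=150.0):
    seg_len = max(1, round(segment_s * fps))  # frames per segment
    return [(start, min(start + seg_len, total_frames))
            for start in range(0, total_frames, seg_len)]
```

A 10-minute video at 30 fps (18,000 frames) splits into four 4,500-frame segments; any leftover frames form a shorter final segment.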

Video Annotation Tools: What to Look For

The right tools can make or break your video annotation workflow.

Must-Have Features in Video Annotation Tools

- Object tracking to propagate labels across frames.

- Interpolation for smoother transitions between manually labeled frames.

- Frame control to navigate large videos efficiently.
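Interpolation is simple at its core. The sketch below assumes straight-line motion between two manually labeled keyframes and boxes stored as `(x_min, y_min, x_max, y_max)`. Annotation tools may use more sophisticated motion models, so this illustrates the idea rather than how any particular tool works.

```python
# Linear interpolation of a bounding box between manually labeled keyframes.
# Boxes are (x_min, y_min, x_max, y_max) in pixels.

def lerp_box(box_a, box_b, t):
    """Blend two boxes; t=0 gives box_a, t=1 gives box_b."""
    return tuple(a + (b - a) * t for a, b in zip(box_a, box_b))


def interpolate_track(keyframes):
    """Fill every frame between keyframes. keyframes: {frame_index: box}."""
    if not keyframes:
        return {}
    frames = sorted(keyframes)
    filled = {}
    for f0, f1 in zip(frames, frames[1:]):
        for f in range(f0, f1):
            t = (f - f0) / (f1 - f0)
            filled[f] = lerp_box(keyframes[f0], keyframes[f1], t)
    filled[frames[-1]] = tuple(keyframes[frames[-1]])  # last keyframe as-is
    return filled
```

With keyframes at frames 0 and 10, an annotator draws two boxes and gets eleven, which is where the time savings come from. It is also why fast or non-linear motion between keyframes needs checking.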

User Experience Matters for Speed

Look for AI video annotation tools that:

- Minimize clicks to reduce annotator fatigue.

- Offer keyboard shortcuts to improve efficiency.

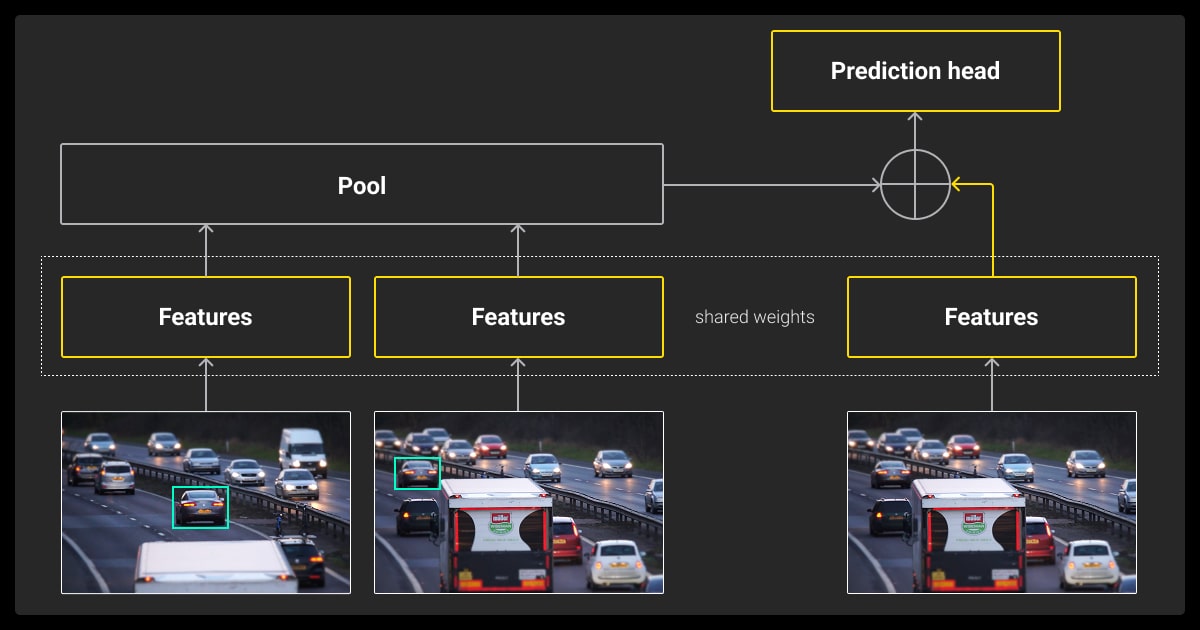

Active learning can reduce labeling time by up to 50%. Annotators only correct errors or label uncertain cases instead of manually labeling every frame, which keeps the focus on the hardest examples. It’s a smart way to scale without compromising on quality.
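One common active-learning strategy is least-confidence sampling: rank frames by how unsure the model is and send the most uncertain ones to annotators first. The input format (frame id mapped to per-detection confidences) and the labeling budget are assumptions for illustration.

```python
# Least-confidence sampling sketch. predictions maps a frame id to the
# confidence scores of the model's detections in that frame (assumed format).

def frame_uncertainty(confidences):
    """Uncertainty of a frame = 1 - confidence of its least certain detection."""
    if not confidences:
        # Design choice: frames with no detections are treated as maximally
        # uncertain, since the model may have missed objects entirely.
        return 1.0
    return 1.0 - min(confidences)


def select_for_labeling(predictions, budget):
    """Return up to `budget` frame ids, most uncertain first."""
    ranked = sorted(predictions,
                    key=lambda fid: frame_uncertainty(predictions[fid]),
                    reverse=True)
    return ranked[:budget]
```

Other uncertainty measures (margin or entropy over class scores) slot into `frame_uncertainty` without changing the selection logic.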

Comparison of Top Tools for Video Annotation

Here’s a breakdown of the top video annotation platforms based on their key features and capabilities:

Label Your Data

Best for: End-to-end video annotation with team collaboration and no minimum commitment

- Upload data, choose annotation types, and download results—no minimum commitment.

- Supports rectangles, polygons, cuboids, keypoints, and segmentation formats.

- Includes cost calculator, instruction generator, free 10-frame pilot, and real-time tracking.

- Offers API integration and team-based permissions for seamless collaboration.

- Ideal for ML teams, researchers, and startups seeking reliable, customizable workflows.

V7

Best for: AI-assisted annotation with real-time collaboration

- Integrates machine learning models to pre-label video data, reducing manual annotation time.

- Offers an intuitive, customizable interface suitable for various industries, including healthcare, robotics, and automotive.

- Supports real-time collaboration with progress tracking, task assignment, and centralized quality control.

- Ideal for teams looking for flexible and automated video annotation workflows.

SuperAnnotate

Best for: Comprehensive annotation with advanced tracking and segmentation

- Supports multiple annotation types, including object tracking, action detection, pose estimation, and lane detection.

- Features automation tools like Autotrack for object movement prediction and interpolation for faster annotation across frames.

- Enables real-time collaboration, feedback loops, and task management for large teams.

- Works well for industries requiring high-detail annotations, such as autonomous vehicles and surveillance.

Amazon SageMaker Ground Truth

Best for: Large-scale annotation projects with AWS integration

- Provides automated and manual video annotation tasks, including clip classification, object detection, and object tracking.

- Uses machine learning to pre-label data, speeding up the annotation process.

- Offers deep integration with Amazon S3 and SageMaker, streamlining data storage, annotation, and model training.

- Suitable for teams working within the AWS ecosystem who need scalable annotation solutions.

Encord

Best for: AI-assisted video annotation with specialized tracking tools

- Supports AI-assisted annotation to streamline the labeling process across large datasets.

- Handles various video formats, making it flexible for different industries.

- Designed to support detailed tracking requirements, making it useful for AI-driven video analytics and motion studies.

CVAT (Computer Vision Annotation Tool)

Best for: Open-source, customizable annotation for computer vision tasks

- Free and open-source, which makes it highly customizable for specific project needs.

- Supports a range of annotation types, including bounding boxes, polygons, and keypoints.

- Allows manual and semi-automated annotation with interpolation features to reduce workload.

- A strong choice for teams that need full control over their annotation workflow without vendor lock-in.

Preparing a Labeling Guide That Annotators Will Follow

Data annotation services create clear guidelines so that their teams know exactly what they need to do. Here’s how to do that.

Defining Classes, Edge Cases, and Label Boundaries

When it comes to video annotation for machine learning, you must reduce any chance of misinterpretation. You do this by providing:

- Precise class definitions that prevent misclassification.

- Edge case documentation that reduces annotation inconsistencies.

Using Visual Examples Over Long Documents

Think of it this way: how often do you read instructions all the way through? Keep your guide short by using images instead of long text documents:

- Quick-reference sheets with labeled examples speed up training.

- Video-based annotation tutorials improve annotator understanding.

Updating Guidelines Based on Mistakes

Video annotation projects evolve, so you may need to update your guidelines over time:

- Build in feedback loops so annotation quality keeps improving.

- Conduct periodic audits to help refine annotation criteria.

Speeding Up Video Annotation with Automation

You can use video annotation software to speed things up.

When Object Tracking Actually Saves Time

- Multi-frame object tracking reduces redundant work.

- Post-tracking correction ensures annotation accuracy.
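To see how tracking saves work, here is a greedy IoU matcher that carries object IDs from one frame to the next, so annotators confirm IDs instead of re-entering them. Production trackers add motion models and re-identification (this one loses a track if the object vanishes for even one frame), so treat it as a sketch. Boxes are assumed to be `(x_min, y_min, x_max, y_max)`.

```python
# Greedy IoU matching sketch for propagating object IDs across frames.
from itertools import count


def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def track(frames, min_iou=0.3):
    """frames: list of per-frame box lists. Returns per-frame lists of (track_id, box)."""
    next_id = count(1)
    previous = []  # (track_id, box) pairs from the last frame
    output = []
    for boxes in frames:
        current, used = [], set()
        for box in boxes:
            # Pick the unused previous track with the highest overlap
            best_id, best_iou = None, min_iou
            for tid, prev_box in previous:
                score = iou(box, prev_box)
                if tid not in used and score >= best_iou:
                    best_id, best_iou = tid, score
            if best_id is None:
                best_id = next(next_id)  # no good match: start a new track
            used.add(best_id)
            current.append((best_id, box))
        output.append(current)
        previous = current
    return output
```

The post-tracking correction step then reduces to reviewing ID switches and new-track events rather than every box.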

Interpolation: Time-Saver or Accuracy Risk?

You have to consider both sides of the equation.

- Advantage: Reduces manual effort by auto-generating annotations between keyframes.

- Risk: Can introduce inaccuracies if not verified properly.
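One lightweight way to verify interpolated frames is to compare them against an independent source, such as a detector's output on a sample of frames, and flag frames where the two disagree. The detection source and the 0.5 IoU threshold here are assumptions for illustration.

```python
# Flag interpolated boxes that drift away from independent detections.
# interpolated / detections: {frame_index: (x_min, y_min, x_max, y_max)}.

def iou(a, b):
    """Intersection over union of two boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def flag_drift(interpolated, detections, min_iou=0.5):
    """Return sorted frame indices whose interpolated box overlaps the detection too little."""
    flagged = []
    for frame, box in interpolated.items():
        det = detections.get(frame)
        # Frames without a detection are skipped, not flagged
        if det is not None and iou(box, det) < min_iou:
            flagged.append(frame)
    return sorted(flagged)
```

Flagged frames are good candidates for an extra manual keyframe, which re-anchors the interpolation around them.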

Semi-Automated Video Annotation for Repetitive Tasks

- AI-assisted annotation speeds up labeling of frequently appearing objects.

- Human validation ensures AI-generated labels are correct.
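A common way to combine AI pre-labels with human validation is to triage them by model confidence: every label still gets a human pass, but confident ones go to a quick-confirm queue while the rest go to a correction queue. The threshold, the queue split, and the input format below are hypothetical.

```python
# Triage sketch for model pre-labels. Each pre-label is a dict with an
# 'id' and a model 'score' (assumed format); the threshold is hypothetical.

def triage(prelabels, confirm_threshold=0.85):
    """Split pre-label ids into (quick_confirm, needs_correction) lists."""
    quick_confirm, needs_correction = [], []
    for label in prelabels:
        queue = quick_confirm if label["score"] >= confirm_threshold else needs_correction
        queue.append(label["id"])
    return quick_confirm, needs_correction
```

Tracking how often "quick confirm" labels get corrected anyway is a simple way to tell whether the threshold is set too low.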

Utilizing pre-trained AI models for initial annotation, followed by human verification, reduced our annotation time by 60%. We implemented CVAT with custom hotkeys and automated quality checks to streamline daily workflows. This setup helped us maintain high accuracy even while processing thousands of frames.

Keeping Your Annotation Team Aligned

Keep your video annotation project on track by making sure your team is on the same page.

Training with Real Project Data

You should simulate real-world scenarios to prevent surprises during deployment.

Reviewing Early Work Before Scaling

Take a lesson from data collection services. Spot-checking initial annotations before you scale helps refine your guidelines.

Encouraging Questions and Feedback Loops

Good communication is the key to success here. Make sure your team can talk to each other in real time through:

- Slack channels or forums where annotators can ask questions.

- Regular check-ins to resolve ambiguities.

Adding Quality Checks Without Slowing Down

Quality control doesn't have to slow you down, as long as you do it right.

Using Lightweight Review Checklists

Simple validation criteria reduce unnecessary reviews.
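Many checklist items can be automated so reviewers only open annotations that fail a check. The rules and the annotation format below (a dict with `label`, `box`, and optional `track_id`) are illustrative assumptions; swap in the criteria from your own labeling guide.

```python
# A lightweight, automated review checklist. Each check is cheap;
# reviewers only look at annotations that fail one. Rules are examples.

def check_annotation(ann, frame_w, frame_h, classes):
    """Return a list of failed checks (an empty list means it passes)."""
    x0, y0, x1, y1 = ann["box"]
    problems = []
    if ann["label"] not in classes:
        problems.append("unknown label")
    if x1 <= x0 or y1 <= y0:
        problems.append("empty or inverted box")
    if x0 < 0 or y0 < 0 or x1 > frame_w or y1 > frame_h:
        problems.append("box outside frame")
    if ann.get("track_id") is None:
        problems.append("missing track id")
    return problems
```

Returning every failed check, instead of stopping at the first, gives annotators a complete fix list in one round trip.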

Measuring Inter-Annotator Agreement

Consensus-based validation ensures consistency across annotators.
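For categorical labels, such as two annotators classifying the same set of object tracks, Cohen's kappa is a standard agreement measure: it corrects raw agreement for the agreement you would expect by chance. Here is a standard-library sketch; for box geometry, teams typically compare annotators by IoU instead.

```python
# Cohen's kappa for two annotators labeling the same items.
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Fraction of items where the two annotators agree
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's class frequencies
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        # Both used one identical class throughout; kappa is undefined here,
        # and this sketch reports it as perfect agreement.
        return 1.0
    return (observed - expected) / (1 - expected)
```

For example, two annotators who agree on 3 of 4 items get a kappa of 0.5 if chance agreement is 0.5, which is noticeably less flattering than "75% agreement".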

When to Spot-Check vs. Review Everything

- Critical annotations require full review.

- Lower-priority labels can be spot-checked.
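The split between full review and spot checks can be encoded as a simple rule: every annotation of a critical class goes to review, and the rest are sampled at a fixed rate. The class names and the 10% rate are hypothetical; the random generator is seeded so the same sample can be reproduced in an audit.

```python
# Review plan sketch: full review for critical classes, spot checks otherwise.
import random


def review_queue(annotations, critical_classes, spot_rate=0.10, seed=0):
    """Return ids of annotations to review. annotations: dicts with 'id' and 'label'."""
    rng = random.Random(seed)  # seeded so the sample is reproducible
    queue = []
    for ann in annotations:
        if ann["label"] in critical_classes or rng.random() < spot_rate:
            queue.append(ann["id"])
    return queue
```

Raising `spot_rate` for a class that keeps failing spot checks is an easy way to tighten review without reviewing everything.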

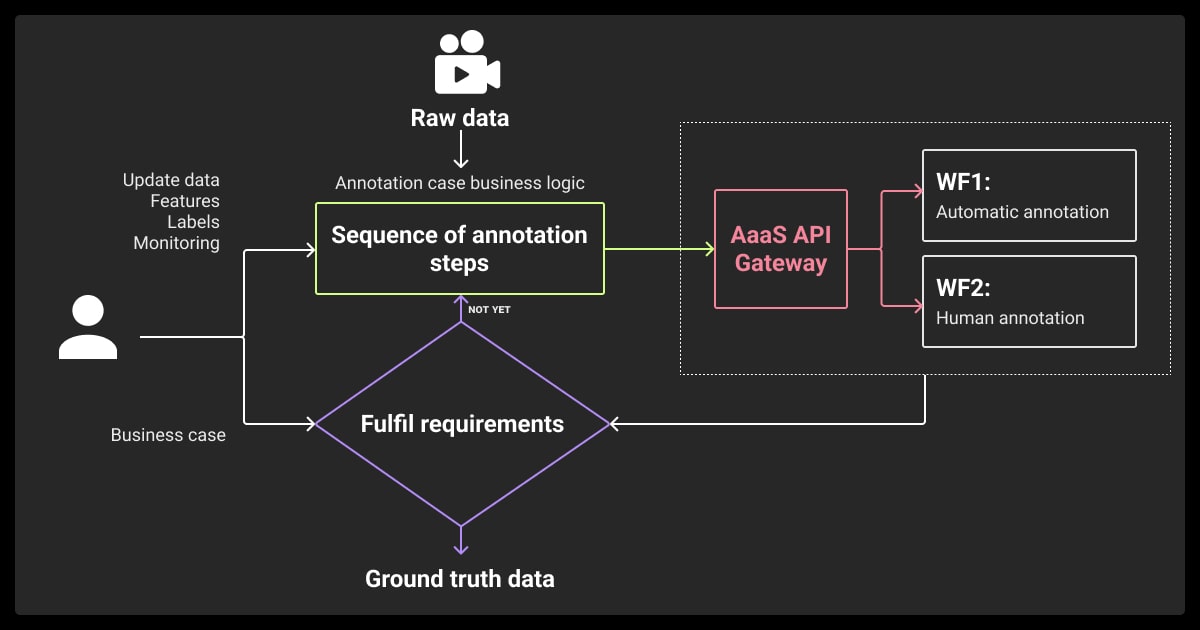

Integrating Video Annotation into Your ML Workflow

To get the best results, your annotations need to fit neatly into your training pipeline.

Choose an export format that matches your model's requirements. COCO, Pascal VOC, and YOLO are all commonly supported by modern computer vision frameworks, so be sure to select the one that aligns with your training pipeline.
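The formats differ mainly in how they encode a box. Pascal VOC stores corner coordinates (xmin, ymin, xmax, ymax), COCO uses `[x_min, y_min, width, height]` in pixels, and YOLO uses a normalized center, width, and height. This sketch converts one corner-format box; class-id mapping and file layout are left out.

```python
# Convert one pixel-space box (x_min, y_min, x_max, y_max) into the
# box conventions used by COCO and YOLO.

def to_coco_bbox(box):
    """COCO: [x_min, y_min, width, height] in absolute pixels."""
    x0, y0, x1, y1 = box
    return [x0, y0, x1 - x0, y1 - y0]


def to_yolo_bbox(box, img_w, img_h):
    """YOLO: [x_center, y_center, width, height], each normalized to 0-1."""
    x0, y0, x1, y1 = box
    return [(x0 + x1) / 2 / img_w, (y0 + y1) / 2 / img_h,
            (x1 - x0) / img_w, (y1 - y0) / img_h]
```

Mixing up these conventions is a common source of silently shifted boxes, so it's worth round-tripping a few annotations and visualizing them before training.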

Use model results to identify labeling issues. By running a model-assisted review, you can flag mislabeled or low-confidence annotations, helping you clean up your dataset before retraining.
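A model-assisted review pass can be as simple as comparing each human label with a trained model's prediction for the same object. This sketch assumes annotations and predictions are already matched by a shared id; both thresholds are hypothetical.

```python
# Model-assisted review sketch: flag confident disagreements and
# low-confidence predictions. Matching by shared id is an assumption.

def flag_for_review(annotations, predictions, disagree_conf=0.8, low_conf=0.3):
    """annotations: {id: label}; predictions: {id: (label, confidence)}."""
    flags = {}
    for ann_id, human_label in annotations.items():
        if ann_id not in predictions:
            continue
        model_label, conf = predictions[ann_id]
        if model_label != human_label and conf >= disagree_conf:
            flags[ann_id] = "possible mislabel"
        elif conf < low_conf:
            flags[ann_id] = "low confidence"
    return flags
```

A confident disagreement is not proof the human is wrong; it is a cue for a second look, and the confirmed mistakes feed back into the guidelines.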

Build a feedback loop between your model and annotation team. When annotators get updates based on model performance, they can refine future annotations, improving accuracy over time.

When to Outsource Your Video Annotation Tasks

Should you call in a video annotation company? It’s worth considering if your project is falling behind. You’ll be pleasantly surprised at how affordable data annotation pricing can be.

One clear sign that it’s time to look into video annotation outsourcing is when your in-house team is stretched. If your team’s missing deadlines or there’s an annotation backlog, external support can help you catch up without compromising quality.

Before choosing a video annotation service, make sure to ask the right questions:

- How do they ensure labeling accuracy through quality control?

- What security policies are in place to protect sensitive video data?

Even when outsourcing, you can maintain control and visibility. Periodic audits and sample reviews help ensure the outsourced work meets your standards and project requirements.

We hope this guide gave you the answers you need. If not, reach out to our team — we’re happy to help.

About Label Your Data

If you choose to delegate data annotation, run a free data pilot with Label Your Data. Our outsourcing strategy has helped many companies scale their ML projects. Here’s why:

- Check our performance based on a free trial

- Pay per labeled object or per annotation hour

- Working with every annotation tool, even your custom tools

- Work with a data-certified vendor: PCI DSS Level 1, ISO 27001, GDPR, CCPA

FAQ

What is video annotation?

Video annotation is the process of adding metadata, such as bounding boxes, labels, and keypoints, to video frames. This tells AI what it’s looking at so you can train your model.

How can I annotate a video?

You can label each object manually or semi-automatically using a video annotation tool. If you go this route, it’s important to find the right video annotation platform for your needs. You can also hire video annotation services if your team can’t meet the project demands.

What is an example of an annotation?

A bounding box drawn around a moving car in a self-driving dataset, and kept on that car from frame to frame, is an example of object tracking, a common type of video annotation. This helps the model learn the car’s position and movement across frames.

What is visual annotation?

Visual annotation helps AI understand the contents of an image or a video. You mark objects, actions, and regions. Video data annotation falls under this category.

Written by

Karyna is the CEO of Label Your Data, a company specializing in data labeling solutions for machine learning projects. With a strong background in machine learning, she frequently collaborates with editors to share her expertise through articles, whitepapers, and presentations.