Ground Truth Data: What It Is and How to Build It Right

Table of Contents

- TL;DR

- What Is Ground Truth Data in Machine Learning?

- Ground Truth Data vs Training Data

- How Ground Truth Data Shapes Model Performance

- How to Build Reliable Ground Truth Data

- Where Is Ground Truth Data Used in Machine Learning?

- Common Challenges When Building Ground Truth

- Best Practices for High-Quality Ground Truth Data for AI

- Making Ground Truth Data Work for Production AI

- About Label Your Data

- FAQ

TL;DR

- Ground truth data is the verified reference that tells you whether your model's output is correct.

- Its quality matters more than its size because even strong algorithms fail when labels are unreliable.

- Building trustworthy ground truth requires clear guidelines, domain expertise, consistent quality checks, and a commitment to updating labels as conditions change.

As AI adoption accelerates, data quality has become the most common point of failure.

Studies show that up to 70-85% of AI project failures trace back to data-related issues, with unreliable labels as a primary culprit. Yet many teams still underinvest in the one asset that determines whether a model can be trusted: ground truth data.

Ground truth is essential in computer vision, autonomous systems, NLP, and applied analytics, where weak labels or unreliable reference data can quietly damage results. If the ground truth is flawed, anything built on top of it becomes less trustworthy.

Yet creating strong ground truth is rarely easy. It requires clear labeling rules, domain expertise, consistency checks, and regular updates.

This article explains what ground truth data means, where it is used, how data annotation quality affects it, and the practices that help teams collect and maintain it well.

What Is Ground Truth Data in Machine Learning?

Ground truth data is the verified reference used to evaluate whether a machine learning model or system is correct. It is the confirmed label attached to a raw input, such as a medical scan labeled “malignant” by a specialist, a LiDAR frame with annotated pedestrians, or a support ticket tagged with the correct intent.

This distinction between raw data and ground truth data meaning matters because models learn from labeled data, not inputs alone.

But ground truth is not always absolute. It depends on context, task definitions, and the labeling standards a team applies. Two qualified annotators can reasonably disagree on an edge case, and what counts as “correct” may shift as guidelines evolve or new scenarios emerge.

In computer vision, ground truth may involve bounding boxes or pixel-level segmentation masks. In NLP, it could be sentiment analysis labels or named entity spans. In healthcare, it often comes from expert diagnoses or lab-confirmed results.

Despite its name, ground truth represents the most reliable reference available for a given task, and its quality directly impacts how well an AI system performs and can be trusted.

Ground Truth Data vs Training Data

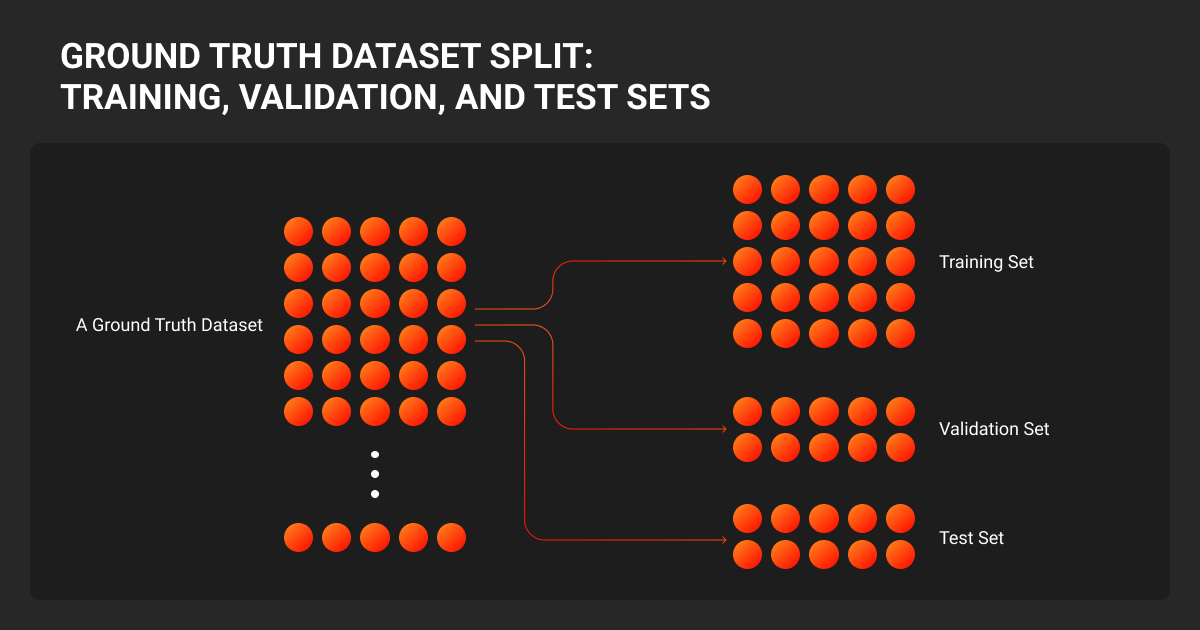

Although closely related, ground truth data and training data serve different roles in machine learning. Understanding this distinction helps in both model development and evaluation.

| Aspect | Training Data | Ground Truth Data |

| Definition | Data used to train the model to learn patterns | Verified correct labels or outcomes used as a benchmark |

| Purpose | Helps the model learn relationships in data | Defines what “correct” looks like |

| Quality Requirement | Can include noisy, inferred, or weak labels | Must be highly accurate and reliable |

| Role in ML Lifecycle | Primarily used during training | Used in both training (sometimes) and evaluation |

| Reliability | May contain inconsistencies or outdated labels | Considered the most trusted reference |

| Usage in Evaluation | Not ideal for measuring final performance | Essential for testing and benchmarking models |

In essence, training data enables learning, while ground truth ensures that what the model learns can be trusted and properly evaluated.

How Ground Truth Data Shapes Model Performance

Ground truth data provides the reliable standard that AI systems depend on throughout their lifecycle.

During training an AI model, it defines what correct outputs look like. During evaluation, it reveals whether a model can generalize beyond the examples it learned from. Without a trustworthy reference at both stages, teams have no way to measure real progress. This makes ground truth critical across the entire machine learning lifecycle.

Its quality directly affects accuracy and trust. Incorrect, inconsistent, or biased labels produce models that repeat those errors at scale. A computer vision system trained on loosely drawn bounding boxes, for example, may seem to perform well in testing but misclassify objects once deployed in a real environment.

Even a strong machine learning algorithm cannot compensate for weak ground truth, while high-quality labels make benchmarking meaningful and performance metrics actionable.

For businesses, the consequences go beyond model accuracy. Teams may spend weeks optimizing against flawed labels, burning engineering cycles on improvements that never materialize in production. In regulated industries like healthcare or finance, unreliable ground truth introduces compliance risk that compounds as systems scale.

Investing in strong ground truth up front leads to better models, clearer evaluation, and fewer costly corrections downstream.

Ground truth is good enough when the label still makes sense weeks later, after you change prompts, swap model versions, or expose the system to different user behavior. A failed strategy usually looks stable in dashboards and unstable in deployment. The root problem is often label definitions that were never stress-tested for durability.

How to Build Reliable Ground Truth Data

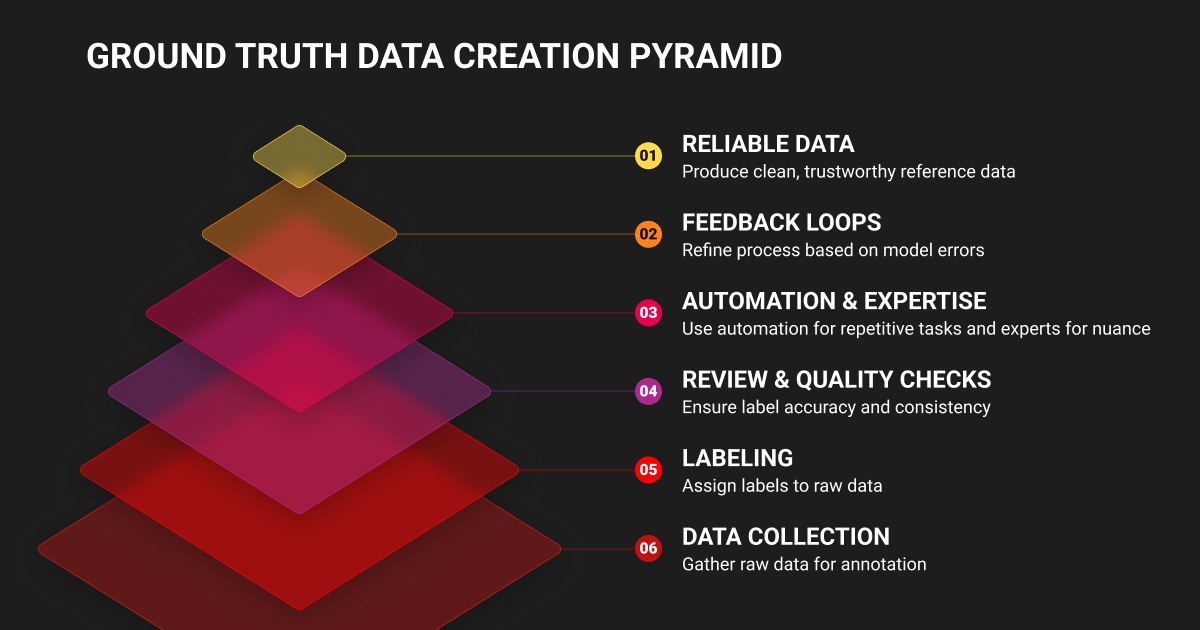

Building ground truth data for AI follows a repeatable pipeline: collect raw data, label it, review the labels, and run quality checks before anything reaches a model. In most workflows, a data annotation company combines domain experts, trained annotators, and automation that handles obvious or repetitive tasks.

Automated pre-labeling with data annotation tools reduces manual effort, while people manage nuance and edge cases. To keep annotations consistent, teams measure inter-annotator agreement, or how often different annotators make the same decision on the same example. When disagreement appears, an adjudication step resolves conflicts and updates the labeling rules.

For high-stakes domains like autonomous driving or medical imaging, many teams partner with ground truth data services that combine annotator expertise with structured QA processes.

This matters because high-quality ground truth depends more on consistency than speed. Strong teams also build feedback loops into the process. They review model errors, identify weak labels, refine instructions, and send difficult cases back for re-annotation. Over time, this turns machine learning datasets into improving systems rather than static deliverables.

The result is cleaner, more reliable reference data that supports both training and evaluation. Without that discipline, labels degrade, edge cases get missed, and model performance becomes harder to trust.

Where Is Ground Truth Data Used in Machine Learning?

The task a model performs determines what ground truth looks like and how teams need to produce it. Ground truth data varies by task because each model learns a different type of output:

- Classification: Assigns a single category to each example (e.g., spam vs. not spam, benign vs. malignant)

- Regression: Predicts continuous values (e.g., house price, temperature, time to failure)

- Segmentation: Labels fine-grained elements like pixels, tokens, or regions instead of entire items

These differences become even sharper across domains:

- NLP: Sentiment labels, named entities, summaries, and high-quality datasets used for tasks like fine-tuning small language models and improving domain-specific performance

- Speech Systems: Transcripts, speaker labels, phoneme boundaries

- Computer Vision: Bounding boxes, keypoints, masks, scene annotations

- Geospatial ML: Land-use classes, building footprints, GPS-verified coordinates

Context also matters. Healthcare often relies on specialized data annotation services to produce expert-reviewed labels, while autonomous systems require precise annotations for dynamic environments.

The main point is simple: the ML task determines what counts as correct and how labels should be produced. A binary classifier may need fast, consistent tagging at scale, while segmentation or medical imaging demands stricter rules, deeper expertise, and heavier quality control.

We consider ground truth good enough when the data reflects real customer behavior, the labeling is consistent enough that two reviewers would make the same call, and the dataset covers the situations the system will actually face in production. Failed strategies usually come from relying on synthetic examples or overly clean data that breaks the moment real inputs hit the model.

Common Challenges When Building Ground Truth

Defining ground truth data for AI may seem simple, but maintaining reliable labels becomes complex in real-world scenarios. Several challenges affect quality, consistency, and scalability:

- Ambiguity: Different annotators may assign different labels to the same data when guidelines are unclear.

- Bias: Labels can reflect limited perspectives from annotators or skewed datasets, leading to distorted outcomes.

- Cost and Scalability: High-quality annotation requires time, expertise, and resources, making it difficult to scale.

- Data Drift: Changing user behavior, environments, or products can make existing labels outdated over time.

- Privacy and Ethics: Sensitive domains like healthcare or finance involve strict regulations and higher risks if errors occur.

Ground truth is not a one-time task. It requires continuous review, clear standards, and strong quality control to remain reliable.

Given these challenges, building reliable ground truth requires a more structured and intentional approach, and a clear understanding of how factors like complexity, volume, and expertise shape data annotation pricing from the start.

Best Practices for High-Quality Ground Truth Data for AI

Building reliable ground truth machine learning requires a structured approach that ensures consistency, accuracy, and long-term usability. Key best practices include:

- Define Clear Labeling Guidelines: Provide detailed instructions, examples, and edge-case rules to ensure consistent annotations.

- Implement Quality Control Processes: Regularly audit samples and involve subject-matter experts for complex or high-stakes tasks.

- Use a Golden Dataset: Maintain a small, trusted benchmark to evaluate annotator accuracy and track model performance.

- Apply Version Control: Treat datasets like products by documenting changes, tracking updates, and maintaining dataset history.

- Adopt Human-in-the-Loop Systems: Combine automation with human review for ambiguous cases and continuous improvement.

- Monitor and Refresh Data: Continuously check for data drift and update labels to keep them relevant.

The goal is not just one-time labeling, but creating a repeatable system that maintains quality and trust over time.

Ground truth is good enough when it stops breaking in the same obvious places. The labels should be consistent enough that when the model fails, you can tell it is learning problem complexity, not label chaos. A failed strategy usually looks clean on paper and messy in production because nobody stress-tested the instructions against real inputs.

Making Ground Truth Data Work for Production AI

Ground truth data is the benchmark that keeps models, analytics, and automation honest. It gives teams a reliable reference for measuring accuracy, spotting drift, and improving decisions with confidence. Its value does not come from dataset size alone. It comes from having the right labels, validated against real-world conditions by people who understand the domain.

A small, well-verified dataset often outperforms a much larger, noisy one. That is why ground truth machine learning should not be treated as a one-time project. It needs review, re-labeling, and updates as products, users, and environments change. If you want dependable outputs, focus on building high-quality ground truth, validating it in context, and maintaining it continuously.

About Label Your Data

If you need accurate, consistent ground truth data, run a free pilot with Label Your Data. Our specialist annotation teams have helped many companies scale their ML projects. Here's why:

Check our performance based on a free trial

Pay per labeled object or per annotation hour

Working with every annotation tool, even your custom tools

Work with a data-certified vendor: PCI DSS Level 1, ISO:2700, GDPR, CCPA

FAQ

What is an example of ground truth data?

A common example is a set of medical images labeled by certified radiologists. The scan is the raw input, and the expert diagnosis becomes the ground truth used to train or evaluate a model.

What’s the difference between data and ground truth?

Data refers to any raw input a system processes, such as images, text, audio, or sensor readings. Ground truth is the verified label or outcome attached to that data, confirming what the correct answer should be. A photo of a street is data. The annotation marking each pedestrian, vehicle, and lane boundary in that photo is ground truth.

Is ground truth data always labeled by humans?

No. Humans often create the first labels, but ground truth can also come from direct measurement, controlled experiments, sensors, or verified system logs. What matters is how reliably the data reflects reality.

How much ground truth data is enough?

There is no fixed number. You need enough data to cover the real-world variation the model will face, including edge cases. A small, high-quality dataset is often more useful than a large, noisy one. In practice, enough means performance stabilizes during testing.

Can synthetic data replace ground truth?

Not fully. Synthetic data can expand coverage, balance classes, and reduce ground truth data collection costs, but it still needs real ground truth for validation. Without that anchor, you cannot tell whether synthetic examples reflect reality or simply reinforce assumptions.

What makes a ground truth dataset trustworthy?

A trustworthy ground truth dataset is accurate, consistent, well-documented, and representative of the task. It should come from credible sources, follow clear labeling rules, and include quality checks such as inter-annotator agreement or audit reviews. If the dataset is biased or outdated, the model will be too.

Written by